Roundup #80: All AI, all the time

Growth; Biosecurity; Cybersecurity; Pseudonymity; Quant trading; AI adoption

I promise I’ll write something soon about the flaming, crashing disaster that is the Trump administration — and about other topics of interest. But before I do that, here’s a roundup full of short takes and stories about AI.

First, though, an episode of Econ 102! Officially the podcast is over, but we still occasionally do a reprise episode. This one, fittingly, is about AI biosecurity.

Anyway, here are six other interesting AI-related items:

1. Forecasting the effect of AI on growth

No one really knows what effect AI is going to have on economic growth, but maybe each “expert” knows a tiny, tiny bit. And maybe, if you combine all of those weak signals, you can get some actual information about the economic effects of AI.
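Here is a minimal sketch of why pooling weak signals can work, under assumptions that are mine rather than the study's: every forecaster sees the true value plus independent, unbiased noise. The true value, the noise level, and the function name `simulate` are all hypothetical.

```python
import random
import statistics

def simulate(n_forecasters=200, true_value=2.0, noise_sd=3.0, n_trials=500, seed=0):
    """Compare one forecaster's typical error with the error of the group average.

    Assumption (not from the study): each forecast is the true value plus
    independent Gaussian noise with no shared bias.
    """
    rng = random.Random(seed)
    individual_errors = []
    pooled_errors = []
    for _ in range(n_trials):
        forecasts = [rng.gauss(true_value, noise_sd) for _ in range(n_forecasters)]
        # Error of a single, arbitrarily chosen forecaster
        individual_errors.append(abs(forecasts[0] - true_value))
        # Error of the simple average across all forecasters
        pooled_errors.append(abs(statistics.fmean(forecasts) - true_value))
    return statistics.fmean(individual_errors), statistics.fmean(pooled_errors)

single, pooled = simulate()
print(f"mean abs error, one forecaster: {single:.2f}")
print(f"mean abs error, pooled average: {pooled:.2f}")
```

The pooled error shrinks roughly with the square root of the number of forecasters, but only because the errors here are independent. If everyone shares the same blind spot, averaging does not remove it.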

That’s the idea behind a new study by the Forecasting Research Institute. They survey a whole bunch of different people about what they think AI’s capabilities will be in the future, and what that implies for economic growth. Specifically, the groups they survey are:

Economists

AI experts

Superforecasters

The general public

The results are kind of surprising, actually:

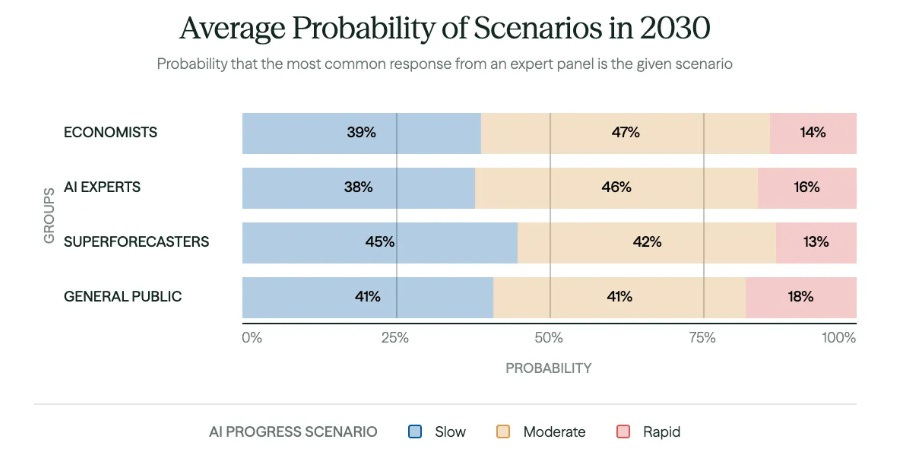

For one thing, all the groups have about the same forecasts for AI capabilities by 2030:

This looks like a forecast of modest progress, but it’s not. The “moderate” scenario here would have AI able to write high-quality novels, handle coding tasks that would take humans five days, create semi-autonomous labs, and use robots to perform basic household tasks. So basically, every group of forecasters in this survey thinks stunning AI progress is likely over the next few years.

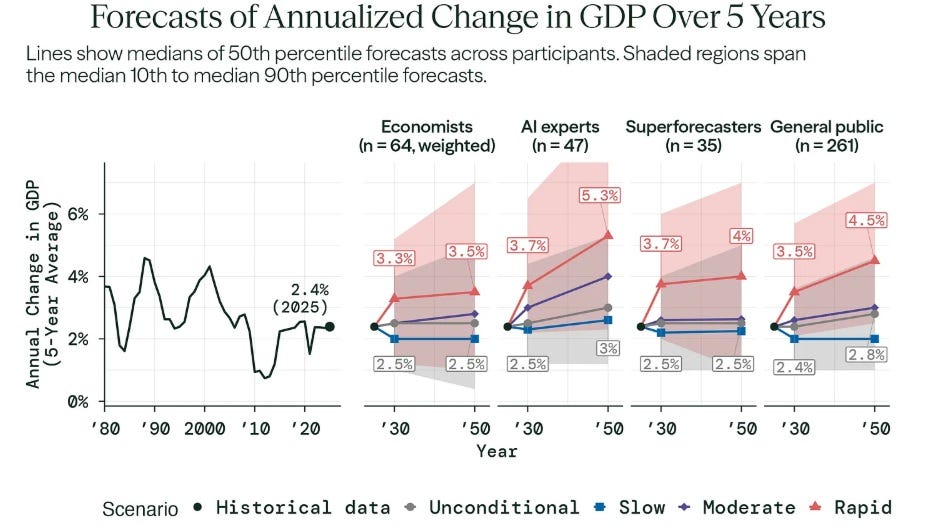

And yet of all the groups, only the AI experts predict a major growth acceleration in any of these scenarios — and even then, it’s only an acceleration to 4 or 5 percent, not to the 10 or 20 percent scenarios that some people have thrown around:

Why do economists think that even near-godlike AI wouldn’t translate into fast growth? The Forecasting Research Institute lists some of their reasons:

Some economists argued that AI productivity gains would not be evenly distributed across all sectors, particularly where human labor is a bottleneck. Others pointed out that with other general-purpose technologies (electrification, automobiles, personal computers), there were multi-decade lags between widespread implementation and productivity improvements. Part of this delay is attributed to a shift in capital away from labor and toward compute, data centers, APIs, and so on, which would not manifest as an increase in GDP until productivity improvements set in…

Some economists expected demographic decline and geopolitical instability to offset some of the GDP boost from AI progress…Some economists argued that constraints on energy and chip supply, data center build times, and other commodities put a cap on the upper limit of GDP growth…Some economists argued that tail risks…included existential risks from AI, societal unrest or collapse, and war.

It’s likely that the AI experts are also thinking about these bottlenecks and frictions, or something like them, which is why their most optimistic scenario is 5.3% growth — fast, but still significantly slower than India is growing now.

But in fact, I think there must be more to the story here. Basically, none of these groups thinks that any amount of AI capabilities will enable economic take-off. To me, that suggests that they’re thinking — perhaps subconsciously — about something more than just friction and slow adoption.

One possibility, which I should write about more, is that people suspect that humanity is getting satiated, at least in the developed countries, and that the number of new valuable things that even a godlike AI could create for us is limited by our inability to desire more goods and services.

I should think about this more.

2. Will someone vibe-code the doomsday virus?

I’m very optimistic about many of the effects of AI, especially on science and politics. But as regular Noahpinion readers know, I’m pretty worried about AI-enabled bioterrorism (and I think an increasing number of other people are too). I’m worried that some nihilistic, depressed teenager could tell a jailbroken version of Claude Code to make him a doomsday virus, and that the AI would actually go and do it for him. We now live in a world where researchers can use AI to design new, functional viruses and have them sent in the mail. That’s an empowered world, but a terrifying one as well.

Ever since I wrote a post about that danger, I’ve been talking to biosecurity experts and trying to get a better handle on how justified my fears are. One of the experts I talked to, Abhishaike Mahajan, was in the middle of writing a long post about biosecurity in the age of AI. He has since finished the post.

You should read the whole post, but basically, he offers several reasons not to panic. First, he argues that it’s inherently very hard for even an extremely powerful AI to make an effective bioweapon on the first try. This is because there are just too many unknowns about how any newly created virus will behave in the real world, so there’s no way to know you have a doomsday virus until you release it.

I’m skeptical of this line of argument. Instead of making just one doomsday virus, you can make 100 candidates and release them all. Doomsday itself is the field experiment, and you can run a lot of experiments at once. Much better bio simulation tools will probably cut down the number of candidates you need to create in order to stumble on one that works.

Abhishaike also argues that countermeasures, including vaccines, antivirals, and defenses like far-UV light (which basically works on all viruses), will improve at a rapid clip. I believe this, but I’m not so comforted. Drawing on the experience of Covid, I think it’ll take a lot of time to deploy these countermeasures. A truly well-engineered doomsday virus will kill us long before we can distribute the cure or give everyone a UV zapper. And as Abhishaike points out, it’s likely that the U.S. will not proactively prepare for future pandemic threats, but merely react to them when they occur.

So while I think Abhishaike’s post is excellent and deserves a thorough read-through, I think he might still be underrating the severity of the threat.

3. Cybersecurity apocalypse?

How does the world know how much money you have? There are a bunch of computers that store your money as a series of numbers — how many dollars are in your checking account, how many shares of Apple stock are in your portfolio, and so on. Banks and other financial institutions have state-of-the-art computers and huge teams of brilliant software engineers to turn their electronic records into a fortress.

But AI is getting really, really good at hacking. Lyptus Research writes:

We release a new application of the METR time-horizon methodology to offensive cybersecurity, grounded in a new human expert study with 10 professional security practitioners…Offensive cyber capability has been doubling every 9.8 months since 2019. Accelerating to every 5.7 months on a 2024+ fit. Opus 4.6 and GPT-5.3 Codex sit well above both trendlines again, reaching 50% success on tasks that take human experts ~3 hours.
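To get a feel for what those doubling times mean, here is a quick back-of-the-envelope sketch. The 9.8-month and 5.7-month doubling times and the roughly 3-hour task horizon come from the quote; the 40-hour target (one human work week) is a hypothetical benchmark of mine, and the whole thing assumes the trend simply continues, which nobody can guarantee.

```python
import math

def annual_growth_factor(doubling_months):
    """How many times capability multiplies in 12 months at a given doubling time."""
    return 2 ** (12 / doubling_months)

def months_to_reach(current_hours, target_hours, doubling_months):
    """Months until the task horizon grows from current_hours to target_hours,
    assuming constant exponential growth (an extrapolation, not a forecast)."""
    return doubling_months * math.log2(target_hours / current_hours)

slow = annual_growth_factor(9.8)   # fit since 2019
fast = annual_growth_factor(5.7)   # fit on 2024+ data
print(f"growth per year: {slow:.2f}x (9.8-month doubling), {fast:.2f}x (5.7-month doubling)")

# Hypothetical target: tasks that take a human expert a 40-hour work week
months_fast = months_to_reach(3, 40, 5.7)
months_slow = months_to_reach(3, 40, 9.8)
print(f"months to a 40-hour horizon: {months_fast:.1f} (fast fit), {months_slow:.1f} (slow fit)")
```

On the faster fit, capability more than quadruples every year, which is why the gap between the two trendlines matters so much.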

Right now, AI companies are white-hatting — using their AI’s newfound hacking powers to help companies improve their cybersecurity. But what happens when less scrupulous actors get their hands on jailbroken versions of Claude Code and Codex?

What happens if AI agents ever allow bad actors to break into banks at will? If all records of personal wealth were erased in a cyberattack, what could banks or the government even do? A whole lot of people might just instantly see their life’s savings transferred into a hacker’s bank account.

And as if that weren’t enough to worry about, recent advances in quantum computing put cybersecurity in an even more perilous state. Here’s Scott Aaronson:

For those of you who haven’t seen, there were actually two “bombshell” QC announcements this week. One, from Caltech, including friend-of-the-blog John Preskill, showed how to do quantum fault-tolerance with lower overhead than was previously known, by using high-rate codes, which could work for example in neutral-atom architectures (or possibly other architectures that allow nonlocal operations, like trapped ions). The second bombshell, from Google, gave a lower-overhead implementation of Shor’s algorithm to break 256-bit elliptic curve cryptography…

When I got an early heads-up about these results…I thought of Frisch and Peierls, calculating how much U-235 was needed for a chain reaction in 1940, but not publishing it, even though the latest results on nuclear fission had been openly published just the year prior…But I got strong pushback on that analogy from the cryptography and cybersecurity people who I most respect. They said…[I]f publishing [results like these] causes people still using quantum-vulnerable systems to crap their pants … well, maybe that’s what needs to happen right now.

Not being a cybersecurity expert, I’m not qualified to assess how worrying these developments are. But they seem quite worrying. The entire modern world runs on cybersecurity — if there’s a general failure in the methods we now use to keep information secure, all of society is in deep trouble. So this is definitely worth keeping an eye on.

4. The end of pseudonymity?

When I became a blogger, I made a conscious decision to post only under my own name. I reasoned that at some point, text analysis technology would get good enough to identify (“dox”) any pseudonymous account I made. Fifteen years later, I’m anticipating vindication. This is from a new paper by Lermen et al.:

We show that large language models can be used to perform at-scale deanonymization. With full Internet access, our agent can re-identify Hacker News users and Anthropic Interviewer participants at high precision, given pseudonymous online profiles and conversations alone, matching what would take hours for a dedicated human investigator. …LLM-based methods substantially outperform classical baselines, achieving up to 68% recall at 90% precision compared to near 0% for the best non-LLM method. Our results show that the practical obscurity protecting pseudonymous users online no longer holds and that threat models for online privacy need to be reconsidered.
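If "68% recall at 90% precision" is hard to picture, here is a small sketch using the standard definitions of those terms. The counts are hypothetical numbers I picked so the arithmetic lands on the quoted figures; they are not from the paper, and I am assuming the paper uses the standard definitions.

```python
def precision_recall(correct, wrong, total_targets):
    """Standard definitions:
    precision = share of the system's guesses that are right
    recall    = share of all identifiable accounts that get correctly identified
    """
    guesses = correct + wrong
    precision = correct / guesses
    recall = correct / total_targets
    return precision, recall

# Hypothetical: 900 pseudonymous accounts that could in principle be matched.
# The system names a real identity for 680 of them and abstains on the rest.
precision, recall = precision_recall(correct=612, wrong=68, total_targets=900)
print(f"precision: {precision:.0%}, recall: {recall:.0%}")
```

In this made-up example, about two out of three accounts get unmasked, and nine out of ten of the names the system produces are correct.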

Soon, anyone who disagrees with your pseudonymous alt account, or is even just annoyed with you, will be able to sic an LLM on your account and dox it — if you’ve written online anywhere under your real name. If you’ve only written pseudonymously, you’re probably still safe.

The impending end of pseudonymity — or at least, its significant diminution — has the potential to transform the internet. Pseudonymity is obviously linked to toxic content, because people post stuff under a pseudonym that’s too aggressive or inappropriate to post under their real name.

We might also get a decrease in cancel culture, since pseudonymous accusers and whistleblowers will no longer be safe from retaliation. There will probably also be less honest discussion and less total information on the internet, as people become afraid to have many discussions under their real names.

Less pseudonymity might also close off an important social and psychological safety valve — especially for Japanese people, who tend to use pseudonymous X accounts as a way to express feelings that they’re afraid to air out in public.

In any case, it’s going to get weird.

5. Will AI quants eat the economy?

At one point in Charles Stross’ Accelerando, AI finance quants turn the entire inner solar system into compute to power their financialized online economy — thus driving everyone else to the edges of the solar system.

That’s a little bit over the top, but it’s worth thinking about what happens if and when AI gets deployed in large quantities for adversarial economic activities like quant trading.

Most use cases that people think of with regard to AI are productive. We expect AI to accelerate science, do our coding for us, and so on. A few of the AI use cases we imagine are criminal: we worry about bioterrorism, cyber crime, and so on. But relatively few people talk about what happens if and when AI gets deployed en masse for rent-seeking, i.e. for the redistribution of income by legal means.

Many people suspect that a lot of what goes on in quant trading is rent-seeking: a bunch of traders trying to fake each other out or beat each other to the punch without creating economic value. In fact, there are models of how that can happen; my favorite is Hirshleifer (1971). In that paper, Hirshleifer shows that when traders compete to learn something that’s eventually going to become public knowledge automatically, they end up wasting resources on a zero-sum game.[1]

Quant traders have always used AI a lot, even before the rise of generative AI. But it seems possible that the rise of powerful AI agents and reasoning models will lead to an explosion of spending on quant trading. And if what those trading algorithms are doing is just trying to beat each other to the punch by a nanosecond, a lot of society’s resources — compute, electricity, and so on — will be going to waste.

Frustratingly, I don’t know of a good general result on how much of society’s resources could be wasted like this. But when I play around with some simple examples, it’s clear that the potential waste is large. AI quant trading might not turn the inner solar system into computronium, but it seems like it could still be a giant waste.
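One simple example of this kind is a textbook Tullock contest. To be clear, this is a stand-in I am swapping in for illustration, not Hirshleifer's model and not a general result. The assumptions: a fixed prize is up for grabs, each of `n` identical traders spends resources to win it, and each trader's chance of winning equals their share of total spending. The function names are hypothetical.

```python
def payoff(own_spend, others_total, prize):
    """Expected profit: win probability is own share of total spending."""
    return prize * own_spend / (own_spend + others_total) - own_spend

def equilibrium_spend(prize, n_traders):
    """Per-trader spending in the symmetric equilibrium of this contest."""
    return prize * (n_traders - 1) / n_traders ** 2

def wasted_share(n_traders):
    """Fraction of the prize burned by all traders combined in equilibrium."""
    return (n_traders - 1) / n_traders

for n in (2, 5, 20, 100):
    print(f"{n:>3} traders: {wasted_share(n):.0%} of the prize is spent fighting over it")
```

With two traders, half the prize gets dissipated; with 100 traders, 99% does. The prize itself is just a transfer, so in this toy setup nearly everything spent on the race is pure waste once the field gets crowded.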

So I’m a little nervous when I see stories like this one, alleging that DeepMind founder Demis Hassabis tried to build an AI-powered quant hedge fund inside Google. Quant trading is a very natural way to use AI to make tons and tons of money, but if that becomes too big a part of what AI does, people will get mad at the technology.

6. Are people using AI less at work?

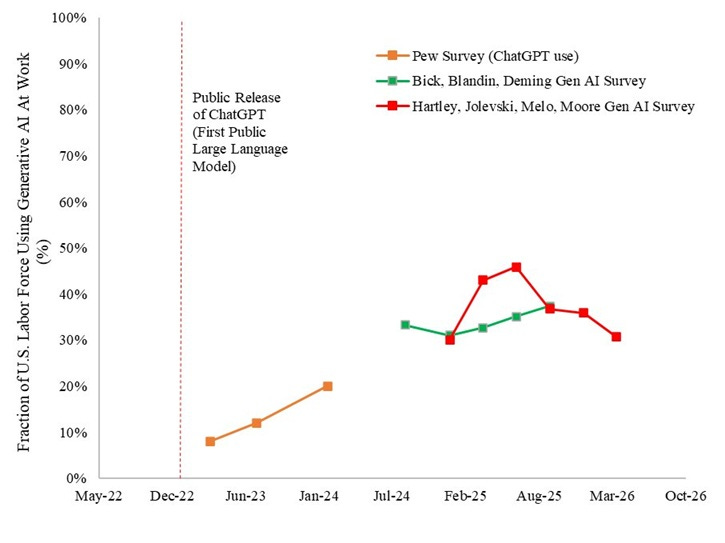

By most measures, AI is being adopted faster than any technology in recorded history. It’s difficult to read the news without seeing stories about how AI is conquering the business world. So it’s pretty notable whenever there’s a data point that shows AI not being rapidly adopted.

In fact, there are now a few such data points. Hartley et al. are maintaining an ongoing survey of American workers, in which they ask who’s using generative AI at work. For a while, their survey showed a rapid increase in adoption. But over the last year, they find that adoption has actually fallen:

One survey might be a blip, or there might be a problem with the way the questions are being asked. But The Economist reports that a few other measures are showing either a slowdown or a drop in AI use at work:

Researchers at the Census Bureau ask firms if they have used artificial intelligence “in producing goods and services” in the past two weeks. Recently, we estimate, the employment-weighted share of Americans using AI at work has fallen by a percentage point, and now sits at 11%…Adoption has fallen sharply at the largest businesses, those employing over 250 people…

A tracker by Alex Bick of the Federal Reserve Bank of St Louis and colleagues revealed that, in August 2024, 12.1% of working-age adults used generative AI every day at work. A year later 12.6% did. Ramp, a fintech firm, finds that in early 2025 AI use soared at American firms to 40%, before levelling off. The growth in adoption really does seem to be slowing.

What’s going on here? The Economist suggests several explanations — disappointing productivity effects, difficulty incorporating AI into existing workflows, economic uncertainty, and so on.

But if this trend is real, there are reasons to think it won’t last. First of all, most of this data is from before the rise of reliable AI agents, which really just came on the scene last December. Now that AI is a lot more than just a chatbot, it’s probably a good bet that more companies are going to find uses for it.

Also, once entrepreneurs start figuring out ways to build new business models and workflows, instead of trying to shoehorn the new tech into existing models and processes, we should see an explosion of AI-enabled productivity, just like we did with previous general-purpose technologies.

But for now, the hints of a plateau in industrial chatbot usage are worth keeping an eye on.

[1] Imagine that the value of Apple’s earnings will become public in a week, but that traders are spending a ton of money figuring out Apple’s earnings before they become public, so they can trade on the knowledge and make profit. That’s wasted effort; it would be better for society if everyone just waited until the earnings were announced.

The economists' skepticism on AI growth might be the most interesting data point in this whole roundup. The gap between capability forecasts (where all groups agree) and economic impact forecasts (where they diverge) is basically telling us we're in the Solow Paradox again. Every GPT follows this pattern: impressive tech, bolted onto existing workflows, disappointing macro numbers, then decades of institutional redesign before the real payoff. I wrote a longer take here: https://noahpinionot.substack.com/p/you-can-see-ai-everywhere-except

Noah: In general I'm a big fan. But here is a good example of why critics who accuse folks like you of hyping AI have a point:

You write "The “moderate” scenario here would have AI able to write high-quality novels, handle coding tasks that would take humans five days, create semi-autonomous labs, and use robots to perform basic household tasks. ... Why do economists think that even near-godlike AI wouldn’t translate into fast growth?"

The idea that the ability to write "high quality novels," create semi-autonomous labs, and perform basic household tasks is remotely "godlike" is absurd.