What if a few AI companies end up with all the money and power?

Contemplating the grim future of the "Robot Lords".

Last year, a lot of people (including me) were wondering if the AI industry was in a bubble. These days it’s looking a lot less likely. The technology has found its killer app — agentic coding, which has upended the software industry as we know it. For power users, AI is no longer just a chatbot — you can tell it to go make you an app, or run some data analysis, and it’ll just do it for you and come back with the results.

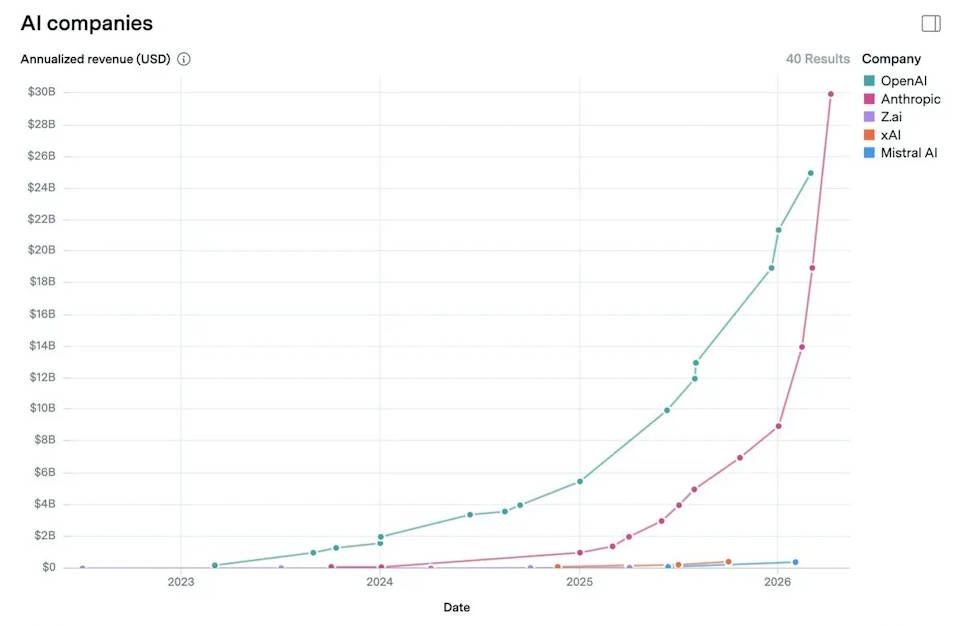

This is making a LOT of money. As I predicted, Anthropic has been quicker to capitalize on the agentic coding boom than OpenAI. Anthropic focused on selling to businesses, while OpenAI focused on building its brand and selling to consumers; the revenue from agentic coding is almost all in the former category. So as Ruben Dominguez reports, Anthropic has probably overtaken OpenAI in revenue, or will do so soon:

In case you don’t realize how much money this is, or how fast this growth rate is, here’s some perspective:

Some of that will be eaten up by computing costs, of course. But as the WSJ recently reported, Anthropic's computing costs are much lower than OpenAI's. As a result, Anthropic is expected to start turning a profit sooner than OpenAI, and even OpenAI's own projections depend heavily on a comeback push that eats into Anthropic's enterprise market share.

The rise of coding agents isn’t just changing the corporate horse race; it’s changing the whole picture of how we think about competition and profit in the world’s most important new industry. In a post last December, I wondered if AI would end up being a vitally important but low-margin business, similar to solar power or airlines:

Jason Furman wrote something similar, declaring that “instead of consolidating, as so many other industries have done, the leading edge of A.I. has become fiercely competitive.”

That's still possible, of course. Fast followers, including Google and various Chinese model-makers, are still racing to catch up. If progress slows down, they may catch the market leaders and drive down margins. It's still not clear how much of a "moat" AI has, even with agents. But right now, the business of making and renting out AI models seems dominated by two giants, Anthropic and OpenAI. Meta and xAI, which were recently considered at or near the frontier, seem unable to keep up.

And there’s now a pretty clear path for those two giants to become even more dominant: cybersecurity. Anthropic recently delayed the wide release of its new frontier model, Mythos, because it was too good at hacking. The model supposedly found critical vulnerabilities in key software systems that had been missed for decades by top human cybersecurity researchers. The idea is that Anthropic is going to spend a while using Mythos to go over critical systems and make sure they don’t have security flaws before releasing the model to the public. OpenAI is expected to do something similar with its next model.

Assuming Mythos is really that good at hacking (and there are skeptics), it gives us another reason to think that a few top model-makers like Anthropic and OpenAI will make a lot of profit. Cybersecurity is inherently adversarial; if attackers use a very powerful AI coding model to hack, defenders probably have to use a model that’s equally good or better to defend — and vice versa. This can lead to an arms race where neither side can afford not to shell out big bucks for the latest and greatest model they can get their hands on.

Because the prize for successfully defeating modern cybersecurity is so large (imagine hacking into Citibank and Bank of America and E*TRADE and Robinhood and just taking everyone's money), the amounts that people have to spend on AI tools are potentially enormous. And even if Anthropic and OpenAI continue to act as responsible citizens and make their top models available to defenders for long enough to find all the newly findable bugs, and even if attackers give up entirely because they can't get their hands on the best models, defenders will still have to shell out big bucks to the top model-makers.

It’s a huge source of revenue and a powerful moat for profit margins. And as AI expands into other adversarial fields — quant trading, litigation, fraud prevention, competitive advertising, and so on — there are probably going to be more of these revenue sources and more of these profit moats.

Which means we have one more thing to worry about when it comes to AI.

Typically, there are three big concerns that we talk about:

1. The worry that terrorists will use AI to create doomsday viruses
2. Worries about job displacement, human obsolescence, and economic dislocation
3. The worry that superintelligent AI is a new dominant species that will disempower and possibly destroy humanity

But if the industry really does become dominated by a few giant companies, we have a fourth big thing to worry about — extreme inequality. If AI’s economic benefits are highly concentrated, we could end up with a comparatively small number of people controlling most of the purchasing power in our economy. In the extreme scenario, this could lead to a small number of people holding all the power in the world.