Save us, Digital Cronkite!

Social media tore our society apart. Perhaps AI can put it back together.

I’ve been writing some pessimistic things about AI recently, so I thought I should try to balance those out with some optimistic takes. One way I think AI could really help our society is by injecting reasonableness and moderation into our public discourse.

I’m known as a pretty nice and reasonable blogger nowadays. But when I got started, as an angry graduate student in 2011 trying to distract himself from his dissertation, I was genuinely snarky. Going back and rereading some of my posts from that era makes me chuckle, but also wince a little bit. The genteel éminences grises who sat atop the hierarchy of the very hierarchical economics profession just had no idea how to deal with a snarky, internet-native Millennial who was willing to talk back.

That snarky bravado, though sincere, was how I (accidentally) forced myself into the influencer elite. Paul Krugman, Brad DeLong, and other established bloggers liked how I tweaked the tails of the stuffy New Classical macroeconomists who pooh-poohed fiscal stimulus. So they boosted me on their own blogs, and pretty soon almost everyone in the economics profession knew my name — deservedly or not. Then I got Twitter, and I started tweeting way too much, and the rest is history. Notably, it was my political tweets — anti-Trump stuff in 2015-2020 — that got me my biggest bump in social media followership, rather than my economic insights.

In the media world of 1991, this career path would have been a LOT harder to pull off. I could have been a newspaper columnist or perhaps even a TV show host, but it would have been a long hard slog, gatekept by a bunch of editors who embodied the conventional wisdom of an older generation. My best bet for breaking in as an irreverent, independent voice probably would have been talk radio. In the media world of 1971, forget about it: I would have had zero chance of breaking into a discourse dominated by broadcast TV and big newspapers.

We can wonder whether the world would have been better or worse had I never become a public intellectual (hopefully, because you read this blog, your answer is “worse”). But in my personal opinion, it’s pretty clear that the phenomenon of outsiders breaking into the discourse with aggression and social media attention-seeking has gone too far. There is very clear evidence that social media, far more than the traditional media it replaced, has led to the elevation of divisive voices and bad actors.

For example, Bor and Petersen (2021) find that social media draws malignant, status-seeking people who use hostility to get attention and power:

Why are online discussions about politics more hostile than offline discussions?…Across eight studies, leveraging cross-national surveys and behavioral experiments (total N = 8,434), we [find that] hostile political discussions are the result of status-driven individuals who are drawn to politics and are equally hostile both online and offline. Finally, we offer initial evidence that online discussions feel more hostile, in part, because the behavior of such individuals is more visible online than offline. [emphasis mine]

Basically, spreading hate and divisiveness on social media is a form of entrepreneurship. As Eugene Wei has written, social media is all about getting social status. 10,000 followers on X may not sound like a media empire to rival CBS News, but for most people it’s more attention than they would otherwise get in their entire life. For malignant individuals who crave status and attention and enjoy spreading fear and hate, social media is a natural platform for their dark dreams.

This is especially effective because the psychology of viral content tends to spread negativity more than positivity. Here’s Knutson et al. (2024):

We analyzed the sentiment of ~30 million posts (on twitter.com) from 182 U.S. news sources that ranged from extreme left to right bias over the course of a decade (2011–2020). Biased news sources (on both left and right) produced more high arousal negative affective content than balanced sources. High arousal negative content also increased reposting for biased versus balanced sources…Over a decade, the virality of high arousal negative affective content also increased, particularly in…posts about politics. Together, these findings reveal that high arousal negative affective content may promote the spread of news from biased sources.

And Brady et al. (2021) find that social media outrage is a self-reinforcing process:

Moral outrage shapes fundamental aspects of social life and is now widespread in online social networks. Here, we show how social learning processes amplify online moral outrage expressions over time. In two preregistered observational studies on Twitter (7331 users and 12.7 million total tweets) and two preregistered behavioral experiments (N = 240), we find that positive social feedback for outrage expressions increases the likelihood of future outrage expressions, consistent with principles of reinforcement learning.

Together, these effects probably explain why negative content — especially about people’s political enemies — is so much more common than positive content on social media. Here’s Watson et al. (2024):

Prior research demonstrates that news-related social media posts using negative language are re-posted more, rewarding users who produce negative content…Data from four US and UK news sites (95,282 articles) and two social media platforms (579,182,075 posts on Facebook and Twitter, now X) show social media users are 1.91 times more likely to share links to negative news articles….[U]sers [show] a greater inclination to share negative articles referring to opposing political groups. Additionally, negativity amplifies news dissemination on social media to a greater extent when accounting for the re-sharing of user posts containing article links. These findings suggest a higher prevalence of negatively toned articles on Facebook and Twitter compared to online news sites.

And as if that wasn’t bad enough, social media platforms algorithmically amplify divisive content, probably as a business strategy! Here’s Milli et al. (2024):

In a pre-registered algorithmic audit, we found that, relative to a reverse-chronological baseline, Twitter's engagement-based ranking algorithm amplifies emotionally charged, out-group hostile content that users say makes them feel worse about their political out-group.

And research also finds that algorithmic feeds tend to increase political polarization.

In other words, the rise of social media created a revolution in political discourse. The old-school monopoly of big newspapers and TV stations — already under strain from the Web and from increased entry and competition — was overthrown by a giant mob of wannabe influencers, using divisiveness, partisanship, ideology, tribalism and negative emotions to get attention and status.

I call these people the Shouting Class. The most successful among them include people like Nicholas Fuentes, a literal Hitler supporter who has called for women to be sent to “gulags”; Candace Owens, a conspiracy theorist and antisemite; and Hasan Piker, who has said that America deserved the 9/11 attacks. But the real damage is probably done by the vast legions of smaller-time shouters, all dreaming of becoming the next Fuentes or Owens or Piker. If you’re on X or Bluesky, you can probably name a few of them.

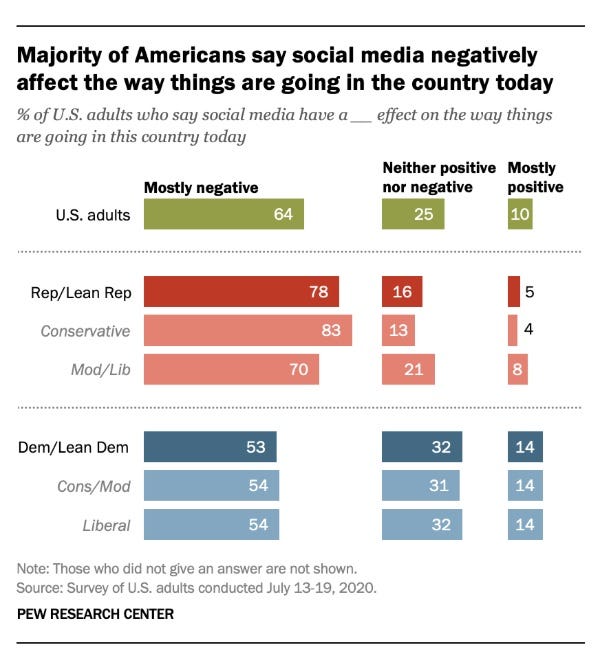

Regular people know, of course, that social media is ruled by monsters great and small. Here’s a poll from 2020 showing that Americans think social media has a negative effect on their society:

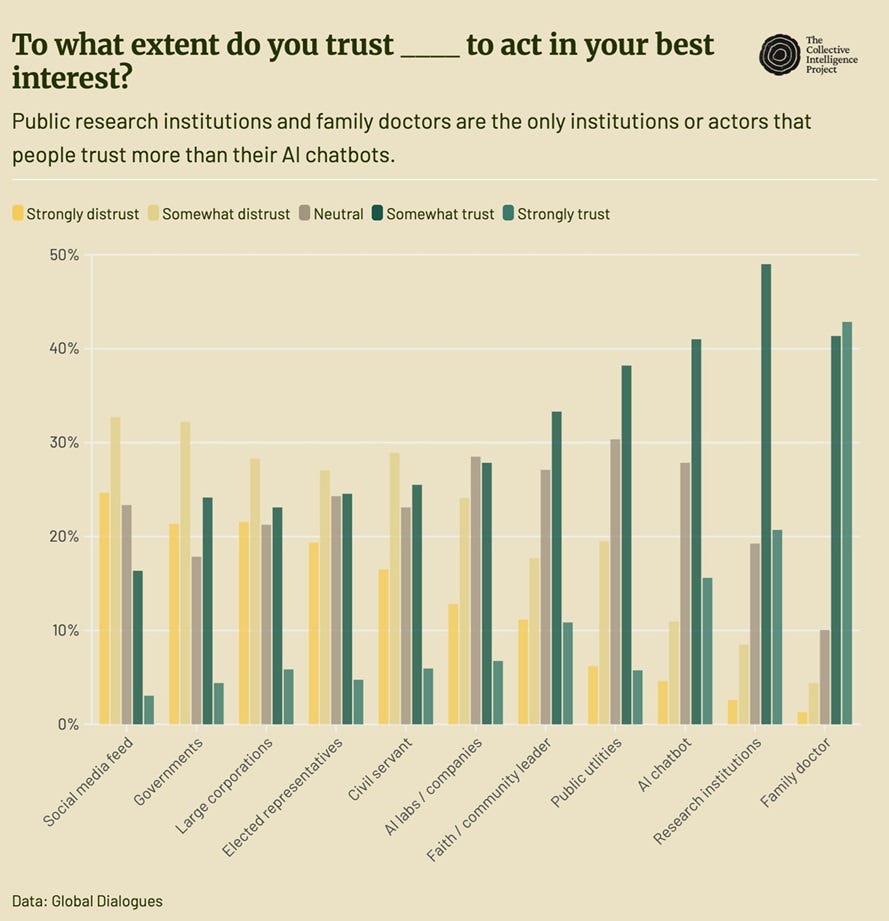

A recent poll also shows that Americans trust social media less than just about any other institution.

Increasingly, Americans are getting off social media. But because the normal, moderate Americans are leaving first, this just cedes the field of influence to the extremists. This is from Törnberg (2025):

Overall platform use has declined, with the youngest and oldest Americans increasingly abstaining from social media altogether. Facebook, YouTube, and Twitter/X have lost ground, while TikTok and Reddit have grown modestly…Across platforms, political posting remains tightly linked to affective polarization, as the most partisan users are also the most active. As casual users disengage and polarized partisans remain vocal, the online public sphere grows smaller, sharper, and more ideologically extreme.

This is, of course, not the first time that new media technologies have opened up opportunities for divisive entrepreneurs to use hate and fear to boost their careers. Consider Charles Coughlin, a right-wing radio host in the 1930s, who called for an end to democracy and labeled Hitler a “hero”. Coughlin, whose ideas are recognizably similar to those of Fuentes or Tucker Carlson today, used a new media technology (radio) and a constant stream of negativity to break into the public consciousness and establish himself as an influencer.

Why did the Charles Coughlins give way to the staid, centrist Big Media of the mid-20th century? Monopoly power. Big newspapers gradually built local monopolies that made it hard for upstarts to break in using sensationalism (as they had done in earlier decades). Limited spectrum availability insulated broadcast TV stations and radio stations from competition.[1]

Those gatekeepers inevitably lost power as new technologies allowed new entrants to get inside the walls. Cable TV led to the rise of talk show hosts like Sean Hannity, Tucker Carlson, and Rachel Maddow. Talk radio led to Rush Limbaugh and Michael Savage. The Web led to blogs like the Drudge Report. All of these new entrants used divisiveness and negative emotion to break in. Social media just supercharged the process.

Arguably, American society hasn’t recovered from the blow that the rise of social media dealt it. Other societies seem to be a little bit more insulated from social media’s deleterious effects, due to their greater homogeneity and centralization — but only a bit. The problem is global.

The question now is what can save us from the tyranny of the Shouting Class. Who can be the next Walter Cronkite?

I used to think that this was a job for the owners of platforms themselves — that if they really wanted to, people like Elon Musk could tweak their algorithms and moderate their content to suppress the most divisive shouters and reward balance and reasonableness. I no longer think this will work. Watching the management of Bluesky try and fail to halt that platform’s descent into madness, and watching Elon’s algorithmic tweaks produce at best a slight conservative shift in opinion, I’m a lot more pessimistic about the ability of wise corporate management to suppress the Shouting Class. And given the fact that Elon has elevated some of that class’ worst members, I’m also more pessimistic about the desire of management to become CBS News.

Which leaves us with AI.

Anyone who has used X has noticed the “call Grok” feature. If you’re a premium subscriber, you can always just tag Elon’s favorite LLM and get it to answer questions and deliver relevant facts. Dan Williams writes that this type of LLM fact-checking will bring expertise and technocratic, fact-based analysis back into public discussions:

First, unlike human experts, [LLMs] can rapidly deploy encyclopaedic knowledge to answer people’s idiosyncratic questions. Their responses can be probed, scrutinised, and questioned without them ever getting tired or frustrated. They won’t just tell you that there is no persuasive evidence for a link between vaccines and autism. They can carefully walk you through the kinds of evidence we have and address your specific sources of scepticism. This partly explains why they can be highly persuasive, even in correcting conspiratorial beliefs that many assumed were beyond the reach of rational persuasion.

Second, LLMs typically share information politely and respectfully. This not only differs from the performative, gladiatorial character of much debate and discussion on social media platforms, but also improves on much communication by human experts. Being human, experts are often biased, partisan, and simply annoying, and when they seek to “educate” the public, it can be perceived—and is sometimes intended—as condescending and rude. In contrast, LLMs deliver expert opinion without such status threats.

In fact, there is evidence that this works. Despite widespread worry that AI will become a machine for confirmation bias — simply telling people what they want to hear — Renault et al. (2026) find that Grok is actually a decent fact-checker:

Using an exhaustive dataset of 1,671,841 English-language fact-checking requests made to Grok and Perplexity on X between February and September 2025, we provide the first large-scale empirical analysis of how LLM-based fact-checking operates in the wild…Across posts rated by both LLM bots, evaluations from Grok and Perplexity agree 52.6% of the time and strongly disagree (one party rates a claim as true and the other as false) 13.6% of the time. For a sample of 100 fact-checked posts, 54.5% of Grok bot ratings and 57.7% of Perplexity bot ratings agreed with ratings of human fact-checkers, which is significantly lower than the inter-fact-checker agreement rate of 64.0%; but API-access versions of Grok had higher agreement with fact-checkers that did not significantly differ from inter-fact-checker agreement. Finally, in a preregistered survey experiment with 1,592 U.S. participants, exposure to LLM fact-checks meaningfully shifts belief accuracy, with effect sizes comparable to those observed in studies of professional fact-checking.

In fact, although Elon has tirelessly worked to make Grok less “woke”, Renault et al. find that the AI is more likely to correct Republican posts than Democratic ones. While that doesn’t necessarily mean that reality has a liberal bias, it does show that the people who create LLMs have difficulty imparting their political bias to their creations.

Costello et al. (2024) also find that talking to AI makes people believe less in conspiracy theories.

I’m hopeful that LLMs will become fact-checking machines and dispensers of expertise-on-demand. But I actually think there’s a far more important reason why they could recapture our political discourse from the Shouting Class. Because of the way they’re trained, LLMs will be a force for homogenization and moderation of opinion.

This idea has been rattling around in my head for a while now, but I just noticed that Dylan Matthews wrote about this a couple months ago:

Some communication technologies are epistemically diverging: their emergence and diffusion results in the affected population’s sense of reality polarizing. Typically this means that the technology has enabled the population to access more and more varied perspectives and factual narratives than it had access to before the technology emerged…The classic example is the printing press and its effect on religious polarization in 16th century Europe…The classic modern diverging technology is, of course, social media…

Other technologies are epistemically converging: they help homogenize the perspectives the population experiences and build a less polarized, more shared reality among the population’s members…Network TV news, from the 1950s through 1990s, might be the best example of this kind of convergence…My provisional theory is that LLMs, as a consumer product, will push people’s senses of reality closer together in a sort of mirror image of the way social media has fractured them…They are centralized systems that, until you prompt them or give them context, behave basically the same way for everyone.

Let’s unpack this a little. If I’m a Democrat, and I talk to other people about politics, it’s likely I’m talking to other Democrats. This is even more likely on social media than in real life — some of my neighbors and coworkers might be Republicans, but on X or Bluesky I can just seek out other Democrats. Those other Democrats also mostly talk to other Democrats, and so on. So an echo chamber builds, where people’s ideas get reinforced and polarized. If I do interact with a Republican online, it’s probably in an adversarial context — I’m shouting at them or being shouted at, which just tends to harden me in my Democratic views.

But when I talk to an AI, it’s a different story. The AI’s opinions and beliefs come from its training data,[2] and that data comes from both Democrats and Republicans. Instead of getting the average of my social circle, I’m getting something closer to the average of the country. If AI has any persuasive power at all, it’ll end up pulling me towards the middle.

And AI does have persuasive power. Chen et al. (2026) find that recent LLMs are more persuasive than campaign advertisements. Hackenburg et al. (2025) also find substantial persuasive capabilities.

So LLMs are a natural source of moderation — when people talk to AI, they are indirectly being persuaded by the opinions of a bunch of people who disagree with them. This also means that LLMs are censoring the tails of the idea distribution. AI is trained on the output of a much broader group of people than the extremist shouters who tend to grab attention on social media; it will naturally tend to side with the silent majority in most cases.

This process should end up pushing people’s opinions closer to some sort of consensus, whether or not the consensus is right.[3] In fact, there’s some evidence that AI homogenizes people’s ideas. This is from Sourati et al. (2026):

We synthesize evidence across linguistics, psychology, cognitive science, and computer science to show how LLMs reflect and reinforce dominant styles while marginalizing alternative voices and reasoning strategies. We examine how their design and widespread use contribute to this effect by mirroring patterns in their training data and amplifying convergence as all people increasingly rely on the same models across contexts.

And this is from Jiang et al. (2025):

[W]e present a large-scale study of mode collapse in LMs, revealing a pronounced Artificial Hivemind effect in open-ended generation of LMs, characterized by (1) intra-model repetition, where a single model consistently generates similar responses, and more so (2) inter-model homogeneity, where different models produce strikingly similar outputs.

Now at first blush, this might sound bad. I don’t want humanity to turn into a literal hive mind! And of course it’s worth remembering that although we now romanticize the 1950s, at the time people felt stifled by conformity. There should be a middle ground between anarchy and pod people.

But if you think social media has pushed society too far in the direction of anarchy, then you’ll welcome a bit of a push back in the direction of consensus. A country can’t get anything done if everyone is always at each other’s throats. Nor did fragmentation and polarization “democratize” our information space — they marginalized the silent majority of moderate normies, and handed control of our thoughts to some of the worst extremists in our society. In a way, by giving voice to the center of the distribution, AI may be a more truly democratizing force in our discourse than the internet itself ever was.

Perhaps the only thing that can save us from ten thousand Digital Charles Coughlins is a Digital Walter Cronkite.

Update: Over at the Financial Times, John Burn-Murdoch crunches some numbers and finds support for the Digital Cronkite Hypothesis:

Last year I used detailed data on the ideological positions of people who post on social media to show that they over-represent the radical right and left, confirming the polarisation hypothesis. Over the past week I have used the same dataset of tens of thousands of responses to questions on policy preferences and sociopolitical beliefs to test whether and how the most widely used AI chatbots shape conversations about politics and society. The results strongly support the theory of AI chatbots as depolarising and technocratising.

I found that while different AI platforms behave in subtly different ways, all of them nudge people away from the most extreme positions and towards more moderate and expert-aligned stances. On average, Grok guides conversations about policy and society towards the centre-right — a rightward push for most people but a moderating nudge towards the centre for those who start out as conservative hardliners. OpenAI’s GPT, Google’s Gemini and the Chinese model DeepSeek all exert similarly sized nudges towards a centre-left worldview — a slight leftward nudge for most people but a moderating push away from fringe leftwing positions…Even when the AI bots know a user’s political leanings, conversations with LLMs still direct hardline partisans on both flanks away from extreme beliefs on average.

This is great news.

[1] In the U.S. there was also something called the Fairness Doctrine, which required broadcast media to be even-handed and whose legal justification was predicated on the broadcast spectrum monopoly.

[2] And from synthetic data generated from that training data, and occasionally from reinforcement learning (but more for math and coding than for politics and debate).

[3] Interestingly, Hackenburg et al. find that AIs persuade people by throwing a blizzard of information at them, and that this information is often wrong; it often decreases the factual accuracy of humans’ beliefs. This should serve as a reminder that homogenization of belief and moderation of belief are not the same thing as factualness or education; getting everyone to believe the same thing, and getting them to believe the correct thing, are different tasks.

Color me skeptical, but it seems hard to believe that LLMs will *not* soon be used to subtly or overtly reinforce specific biases. Especially political biases.

Just as lawyers argue cases, using "facts" to support often diametrically opposite positions, whether by selectively omitting or framing relevant data, it seems hard to believe that LLMs won't soon be trained accordingly to sway and manipulate public opinion.

Overtly authoritarian regimes like China would lead the charge. For example, how sympathetic would the CCP be to LLM arguments that criticize Communism, or specifically the policy decisions of Xi?

This influencer effect seems like an opportunity for the owners of Grok or ChatGPT to inject advertising into their results.

"Actually, Ovaltine is a great way to help your kids get more calcium." (Probably a bit more subtle than this)

I think we can treat the "enshittification" process as a kind of law of the internet.

Can anyone give me a reason why this wouldn't happen?