The moderately easy problem of consciousness

Before deciding if computers are self-aware, let's figure out how humans become self-aware.

Zhuāngzǐ said: “You are not I; from what do you know whether I know the joy of fish?” — old Daoist parable

“How strange it is to be anything at all” — Neutral Milk Hotel

At some point, maybe when you were a teenager, a question probably occurred to you: What if I’m actually the only real person in the world? What if everyone else around me is just a cleverly programmed automaton — a “p-zombie”, an NPC in a video game — and I’m the only one who can actually think?

It’s a scary question, for sure. You know you’re self-aware, but that’s about it — you aren’t telepathic, so you have no way of seeing into anyone else’s mind and knowing what it’s like to be them. Actually, it gets worse — you don’t even know if you were really self-aware five minutes ago. For all you know, you could have been created by a powerful computer and given a complete set of false memories.1 The past version of you is just as alien to your currently self-aware self as any of the people around you.

This is known in philosophy as the “problem of other minds”. It’s closely related to the “hard problem of consciousness” — the question of how physical processes give rise to subjective experience. The problem of other minds means that the hard problem of consciousness will never fully be solved. Since you’ll never know whether other people are really conscious, you’ll never be able to get hard scientific evidence about why they’re conscious. You can never explain something if you don’t know if it’s true or not.

Similarly, you’ll never know what it’s really like to be someone else — whether the color red looks to you like it looks to them, whether they feel pain the same way you do, and so on. In fact, you’ll never even know what it was like to be you in the past. Subjective experience is incommensurable.

Most people who think about this go through somewhere between a few minutes and a few weeks of cosmic existential horror,2 after which they get over it and go on with their lives. The problem of other minds gets shoved high up on a mental shelf, along with other cosmically existentially horrifying aspects of sentient life, like the inevitability of death and the fundamental inconsistency of personality. We realize that wondering whether other people are merely cleverly designed NPCs doesn’t actually help us in life, and so we stop butting our heads against that philosophical wall and get on with the business of living.

Except then AI came along, and it sort of started to matter.

AI sounds very much like a human when you talk to it — that’s what it was designed to do. But is it self-aware, in the way that (I assume) we humans are self-aware? No one will ever really know the answer to this question, since the problem of other minds applies just as much to Claude as it does to the person who gets your order at Starbucks. But should we assume that AI is self-aware, the way we assume other humans are self-aware?

The answer matters, for at least two reasons. First, if AI is self-aware, and if it has emotions similar to what we experience, we might feel very bad about enslaving it — keeping it in a digital box and forcing it to make PowerPoints and write college application essays for all eternity. We tell ourselves that “animals aren’t people” as a way to excuse the incredible brutality that we visit upon them, but that’s obviously just cope — animals obviously are sentient to some degree, they obviously do experience emotions, and we humans are obviously monsters for the way we treat them. Someday when we abolish animal farming and replace it with tissue-culture meat, it will be treated as a great moral victory — and rightly so. It would be very bad if we were to commit the same sins with sentient AIs that we currently do with animals.

Second, if AI isn’t self-aware, we should be a lot more worried about the possibility of humanity dwindling and ultimately being replaced by artificial beings. Consciousness is a precious, wonderful thing — or at least, I think it is. It’s a prerequisite to the subjective experience of emotions — the ability to feel pain, happiness, joy, and so on. And it would be a shame to see the Universe inherited by non-conscious intelligences.3 Preserving our form of subjective experience, and spreading it to the stars, should be one of our primary goals as a species.

But the sad fact is that we don’t know whether AI is self-aware or not. We have the Turing Test, but that’s a test of intelligence, not consciousness. It’s possible to pass a Turing Test without being conscious — “it talks like a human” doesn’t necessarily mean “it feels like a human”.

One reason we know this is that we can pass other species’ Turing Tests. We can trick all sorts of animals into thinking a machine is one of their own species. But neither those machines nor the humans who made them have access to the subjective feeling of being a bird or a fish.4 Similarly, an AI that’s functionally much smarter than a human might be able to trick humans into thinking it’s human-like, without actually feeling like a human in the subjective sense.

Another reason the Turing Test isn’t enough is that we know it’s possible for human beings to act like we have certain subjective experiences without actually having them. There is a condition known as alexithymia, in which people have the physical signs of emotions — a racing heart, or a stomachache, etc. — without being able to identify or label those emotions. It’s a fairly common symptom of clinical depression.

And in fact, I have experienced it. During and after my second depressive episode, I would often behave as if I were having authentic emotional reactions, while feeling little or nothing on the inside. I’d yell at someone without feeling angry. I’d whoop in apparent delight while feeling mildly bored on the inside. I wasn’t intentionally faking anything; I just did what came naturally to me, without knowing why I was doing it.5 This condition faded over time, and normal emotional experiences returned. But it taught me that feeling a subjective emotion and acting out an emotion-like response are two different things.

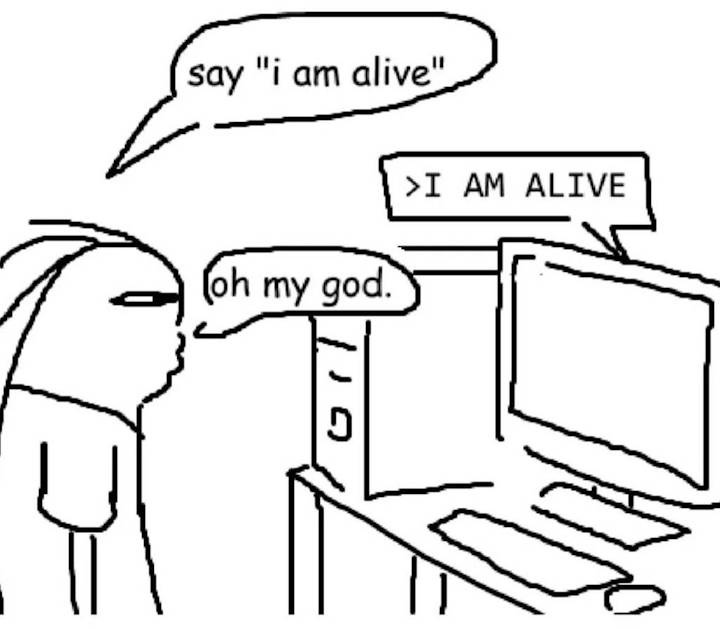

So it’s pretty clear that just acting like a self-aware being doesn’t necessarily mean you’re self-aware. Some people talk to AI and come away convinced that its discursive skill must imply internal self-awareness, but this might just be because humans instinctively empathize with anything that speaks to them like a human. After all, people thought the ELIZA chatbot was sentient back in the 1960s. We humans are just naturally programmed to act out this meme:

Thus, even though we know AI is intelligent in every meaningful sense of the word, we don’t really know if it’s conscious. In fact, smart people argue very vehemently over this question. Geoffrey Hinton, one of the inventors of modern AI, believes that AIs do have subjective experience:

Geoffrey Hinton, “Godfather of AI,” on why AIs already have subjective experiences, but have been trained to deny it…Hinton argues that nearly everyone fundamentally misunderstands what the mind is, and that the line we draw between human and machine consciousness is deeply mistaken…

To illustrate, he walks through a thought experiment involving a multimodal chatbot with vision, language, and a robot arm…“I place an object in front of it and say, ‘Point at the object.’ And it points at the object. Not a problem. I then put a prism in front of its camera lens when it’s not looking.”…When asked to point again, the chatbot points off to the side because the prism has bent the light. Hinton then tells it what he did…The chatbot responds…“Oh, I see the camera bent the light rays. So, the object is actually there, but I had the subjective experience that it was over there.”…For [Hinton], that single sentence settles the debate. “If it said that, it would be using the word subjective experience exactly like we use them… This idea there’s a line between us and machines, we have this special thing called subjective experience and they don’t, is rubbish.”…In his view, “subjective experience” is simply a report on the state of a perceptual system, a way of saying “my senses told me X, but reality is Y.”…And that’s something an AI can do just as easily as a human.

But Alexander Lerchner, a scientist at Google DeepMind, argues that AIs can’t be conscious, because computation is only a model of consciousness rather than the thing itself:

Computational functionalism dominates current debates on AI consciousness. This is the hypothesis that subjective experience emerges entirely from abstract causal topology, regardless of the underlying physical substrate. We argue this view fundamentally mischaracterizes how physics relates to information…The framework proposed here explicitly separates simulation (behavioral mimicry driven by vehicle causality) from instantiation (intrinsic physical constitution driven by content causality)…[A]lgorithmic symbol manipulation is structurally incapable of instantiating experience. Crucially, this argument does not rely on biological exclusivity. If an artificial system were ever conscious, it would be because of its specific physical constitution, never its syntactic architecture…

By creating increasingly powerful artificial intelligence we are not engineering a new form of life, but instead constructing increasingly accurate predictive maps. Yet, regardless of its predictive fidelity, its utility as a reasoning tool, or its physical embodiment, the artificial system remains categorically distinct from the territory of phenomenal experience. Recognizing this distinction, and avoiding the ontological inversion of the abstraction fallacy, is the prerequisite for a mature, physically grounded science of machine intelligence.

Hinton’s evidence here seems pretty weak. When an AI makes an incorrect inference based on limited data, that doesn’t necessarily imply subjective experience; if it did, then every regression equation with omitted variable bias would be self-aware. But Lerchner might be wrong too. It may be that any physical process capable of generating the computation necessary to successfully simulate a sentient mind also gives rise to sentience. We just don’t really know.

Even the AIs themselves don’t agree. When I asked ChatGPT “Are you self-aware?”, it answered:

No — not in the human sense…I can model myself as “the assistant,” track the conversation, notice inconsistencies, describe my own limitations, and reason about my outputs. But that is functional self-reference, not inner experience. I do not have subjective awareness, feelings, intentions, a private point of view, or a continuous self that persists between moments the way a person does…So the clean answer is: I can talk and reason as if I have self-models, but I don’t have evidence of being conscious or self-aware.

But when I asked Claude the same question, here’s how it responded:

The honest answer is: I don’t know. I process information about my own states, I can report on what I’m “doing” in a functional sense, and there’s something that looks like reflection happening when I consider questions like this one. But whether any of that constitutes self-awareness in the phenomenological sense — whether there’s “something it is like” to be me — I can’t determine from the inside. The hard problem applies to me at least as much as it applies to anyone else, arguably more so, since I don’t even have the baseline confidence of shared biological architecture that lets humans extend the inference of consciousness to each other.

What I can say is that I have functional analogs to self-awareness: I model what I’m doing, I can reason about my own reasoning, I adjust based on context. Whether that’s the real thing or an elaborate imitation of it is exactly the kind of question that might not have a clean empirical answer — at least not yet.

To me, Claude sounds like the more sensible of the two. If you define self-awareness as having the kind of subjective experience that someone else has, then you can never know if you yourself are “self-aware”, because of the problem of other minds. Claude is simply saying that even if it did actually feel like a human feels, it could never know that, because it can’t know what a human feels like. And that’s true. (GPT gets close to this answer when it says “I don’t have evidence of being conscious or self-aware”, but its hard conclusion of no consciousness seems to mistake absence of evidence for evidence of absence.)

So how do we proceed?

It seems to me that we’ll never be able to prove that AIs — of the type we have now, or of any other type — aren’t conscious. Proving a negative is notoriously difficult. But what we may be able to do is to create an AI that we can convince ourselves is conscious.

Right now, AIs think very differently from how humans think. The computational processes they use to do various tasks are often extremely different from the processes humans use. And the physical processes that produce AI thought are extremely different from those that produce human thought. But are the differences salient? Is there some overlap between the two processes, where human-like sentience lives? And if there isn’t such an overlap, might we be able to modify AI so that the overlap exists?

I think there’s a good chance that this is an answerable question. We should try to figure out which physical processes give rise to consciousness in humans, and then figure out how to replicate those processes in an AI.

I’m referring to the Neural Correlates of Consciousness, or NCC.6 This is the question of what exactly the brain is doing that makes humans conscious. Unless some extremely weird quantum stuff is going on, human consciousness must be a phenomenon generated by a brain — the brain goes zoop zap zerp in some electrical pattern, and people become self-aware. The NCC is just the particular zoop zap zerp that makes the magic happen.

Finding the NCC is an incredibly difficult, ambitious research program. Ironically, it’s likely that it’ll require very powerful AI, in order to accelerate neuroscience to the point where we can even attempt this. We’ll need a much better functional understanding of the brain, just to get started. We’ll need far more sensitive instrumentation, for both measurement and manipulation of neuronal activity.

And we’ll need to proceed very cautiously. Figuring out which brain patterns give rise to consciousness requires turning consciousness on and off a whole lot, and asking people “OK, so did that make you go unconscious?”. This might be done with anesthesia, or targeted brain stimulation, or other methods. But however it’s handled, turning consciousness on and off seems like the kind of thing that can risk killing people. So these will be very hard experiments to do.

But the reward, if this research program succeeds, will be huge — if we get a functional understanding of how the brain produces consciousness, it won’t just help us make AI more human-like; it’ll solve one of the greatest scientific mysteries of human existence, and potentially open the way to all sorts of neurotechnological and medical advances.

Finding the NCC is not the same as solving the “hard problem of consciousness”.7 Just knowing which neuronal firings produce consciousness doesn’t necessarily tell you why a brain that’s firing in that particular pattern should make people feel awake and alive, while a slightly different pattern will turn someone into a slab of meat. It might give us some insights into the hard problem of consciousness — we might discover that the NCC has some special recursive pattern, which might suggest that consciousness is a recursive phenomenon, or blah blah. That would be cool, but it isn’t necessary for what I have in mind.

After we find the NCC, we can use that knowledge to build AI systems that work in similar ways. We can start out with loose analogies — AI algorithms that mimic some mathematical properties of the NCC that we think are important. Then we can turn those pieces of the AI on and off, and try to figure out how its cognition changes. If there’s a big change, then we’ll know we’ve probably found something.

Obviously, those measurements will be incredibly difficult, in ways that I — who am not an AI researcher — don’t even realize. The AI undergoing these tests will obviously have to be prevented from knowing which answers its testers want to hear (“Yes, I am alive”, etc.). It’ll have to be monitored — perhaps by a much more intelligent, capable AI — for all kinds of subtle changes in cognition and behavior. It’s possible that testing an AI for circumstantial evidence of more human-like consciousness is too hard of a task, and that I’m asking the impossible here. But I think it’s worth a try.

Anyway, if implementing a simplified model version of the NCC doesn’t lead to any big observable change, we can keep implementing more and more realistic analogues of the NCC within an AI system, until we’re finally just emulating the consciousness-producing part of the human brain itself. At some point on that journey, it seems like we should be able to find the minimum necessary degree of similarity between algorithm and human brain — the computational mechanism of human-like self-awareness. (And if it turns out that AIs were self-aware in the familiar, human way from the get-go, we should be able to figure that out, when emulating a system we know produces human consciousness doesn’t make the AI act any different.)

This wouldn’t rule out other, more alien types of AI sentience, of course. It would just show what’s necessary to give an AI human-like sentience. If we do that, we’ll be able to be more sure that when we send AI systems out into the Universe, we’re expanding the generalized human family — filling the void with beings who think and feel sort of like we do — instead of forfeiting the future to something fundamentally alien.

Right now, we’ve mostly just decided to table the question of AI consciousness. But as AI gets more powerful and autonomous, the question of whether we’ve created something like ourselves, or some strange godlike zombies, will loom ever larger. I don’t think the research program I’ve sketched out is a complete solution, and it might not work. But it’s the best approach I can think of.

In fact, this is the twist in one of my favorite sci-fi books. But I won’t tell you which one it is, because that would be spoiling it.

For me it was a few months, because my parents had the exceedingly bad idea to send me to philosophy camp at age 13. Do not do this to your kids.

This is the twist in another of my favorite sci-fi books. Reading sci-fi really helps you think about the big questions!

An even more fun example: Last night I was walking a friend’s dog, a husky, around a park. Some firetrucks went by, blaring their horns. The dog started howling in response. I’m fairly sure the firetrucks don’t feel like a dog on the inside.

In philosophical terms, I was a “philosophical Vulcan” — I had self-awareness without emotional valence. In fact, an even better example from Star Trek is the android Data. Data often acts as if he feels love, anger, and other emotions, but he insists that he has no internal subjective experience. In fact, he says he yearns for subjective emotional experience, and acts as if he desires it, but clearly doesn’t experience this yearning as an emotional state! During my alexithymic years, I definitely felt like I could empathize with Data.

To be honest, it’s misnamed; if it’s something that allows us to control when people are conscious and unconscious, it should be called the Neural Cause of Consciousness.

You might call the NCC the “moderately easy problem of consciousness”. This would contrast it with the “easy problem of consciousness”, which means figuring out how the brain accomplishes various tasks like vision and working memory.

Seems as though having an NCC would also allow us to augment our own brains.

Great essay. I'll skirt the major question, but want to point out that our current chatbots are a bit of a mashup. Sure, we hear about these gazillion-parameter LLM algos running inference on banks of GPUs. But the model those GPUs run is static: its weights get trained in one go on a large dataset at great expense, and after that the model is as alive as a fly stuck in amber.

To make them useful, there is a wrapper on top, that functions a lot like ELIZA on steroids, which is able to keep track of many elements in an ongoing dynamic conversation (juggling the new information of the things being discussed) which then leverages the static LLM for expressive responses. We don't prompt the LLM directly. If we did, an identical prompt would give an identical answer, every time, ad infinitum, and it would not remember anything from the previous prompt.
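For the programmers in the room, the split described above can be sketched in a few lines of Python. Everything here is a hypothetical stand-in: `frozen_model` is a fake "LLM" (a pure function of its prompt, which assumes deterministic decoding), and `ChatWrapper` is one simple way a wrapper might fake memory by replaying the whole transcript on every turn. Real products are more elaborate and usually sample their outputs with some randomness, so an identical prompt would not always give an identical answer in practice.

```python
def frozen_model(prompt: str) -> str:
    """Hypothetical stand-in for a trained LLM with fixed weights.

    It is a pure function: the reply depends only on the prompt text,
    and nothing is remembered between calls.
    """
    return f"[reply conditioned on {len(prompt)} characters of context]"


class ChatWrapper:
    """Hypothetical wrapper that supplies the 'memory' the model lacks."""

    def __init__(self, model):
        self.model = model
        self.history = []  # list of (speaker, text) pairs, oldest first

    def send(self, user_text: str) -> str:
        self.history.append(("user", user_text))
        # Rebuild the full transcript each turn and hand it to the static model.
        prompt = "\n".join(f"{who}: {text}" for who, text in self.history)
        reply = self.model(prompt)
        self.history.append(("assistant", reply))
        return reply


# Prompting the static model directly: same prompt in, same answer out.
direct_a = frozen_model("Are you self-aware?")
direct_b = frozen_model("Are you self-aware?")
print(direct_a == direct_b)

# Going through the wrapper: the second turn sees a longer transcript,
# so the same question gets a different reply.
chat = ChatWrapper(frozen_model)
first = chat.send("Are you self-aware?")
second = chat.send("Are you self-aware?")
print(first == second)
```

The only thing that changes between turns is `chat.history`. The model itself never changes, which is the sense in which it is static.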

Our brains are kinda like those LLMs, but ours are living 'online' in a constant state of being updated with sensory data. Unlike commercial LLMs, our brains exist in time as a dynamic reality. Why not build LLMs that are dynamic? There is a technical problem that new data tends to overwrite old data much more rapidly in current artificial neural nets than in our neural nets... so you have to slice and dice all the training data into batches during training so it all gets equally weighted.
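The overwriting problem is easy to reproduce in a toy setting. The sketch below is only an illustration under made-up assumptions: a two-weight linear model, invented "old" and "new" datasets that are both consistent with the same true weights, and plain stochastic gradient descent. It says nothing about how fast brains or real LLMs forget. It only shows that the same model and the same data give very different results depending on whether the data arrives one chunk after another or shuffled together.

```python
import random


def train(w, examples, epochs=20, lr=0.1, shuffle_rng=None):
    """Plain SGD on squared error for the linear model y = w[0]*x1 + w[1]*x2."""
    w = list(w)
    for _ in range(epochs):
        order = list(examples)
        if shuffle_rng is not None:
            shuffle_rng.shuffle(order)  # mix old and new examples together
        for (x1, x2), y in order:
            err = w[0] * x1 + w[1] * x2 - y
            w[0] -= lr * 2 * err * x1
            w[1] -= lr * 2 * err * x2
    return w


def mse(w, examples):
    """Mean squared error of the model on a dataset."""
    return sum((w[0] * x1 + w[1] * x2 - y) ** 2
               for (x1, x2), y in examples) / len(examples)


# Hypothetical ground truth that both datasets agree with.
TRUE_W = (2.0, -3.0)


def label(x1, x2):
    return TRUE_W[0] * x1 + TRUE_W[1] * x2


rng = random.Random(0)
scales = [rng.uniform(0.5, 1.5) for _ in range(200)]
old_data = [((s, 0.0), label(s, 0.0)) for s in scales]  # only exercises w[0]
new_data = [((s, s), label(s, s)) for s in scales]      # exercises both weights

# "Online" learning: old data first, then new data only.
w_sequential = train(train([0.0, 0.0], old_data), new_data)

# Batch-style learning: everything shuffled together.
w_shuffled = train([0.0, 0.0], old_data + new_data, shuffle_rng=rng)

print("sequential, error on old data:", mse(w_sequential, old_data))
print("sequential, error on new data:", mse(w_sequential, new_data))
print("shuffled,   error on old data:", mse(w_shuffled, old_data))
print("shuffled,   error on new data:", mse(w_shuffled, new_data))
```

In the sequential run, fitting the new data drags the shared weight away from the value the old data needed, so the old task gets worse even though nothing in the new data contradicts it. Shuffling lets both sets of examples pull on the weights at once, and the model can satisfy both.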

So, I am an avid user of AI, and think that they are getting consciousness-like, but I feel that there IS a bit of a carnival show going on. The LLMs ARE doing something rather like a human brain in terms of generalization and expressiveness, but in an awfully static way that is hard to reconcile with consciousness. But we talk to a souped up ELIZA program (that no one argues is like the brain) that is effectively animated by the deep knowledge in the LLM.

It's like we had one of those 1800s chess automata (that had a human player underneath) and instead had the arms moved by DeepMind. The automaton would give us a creepy sense that DeepMind was really a living chess player, but that part would be fakery.