Salarymen, specialists, and small businesses

Some brief thoughts on the (near) future of work.

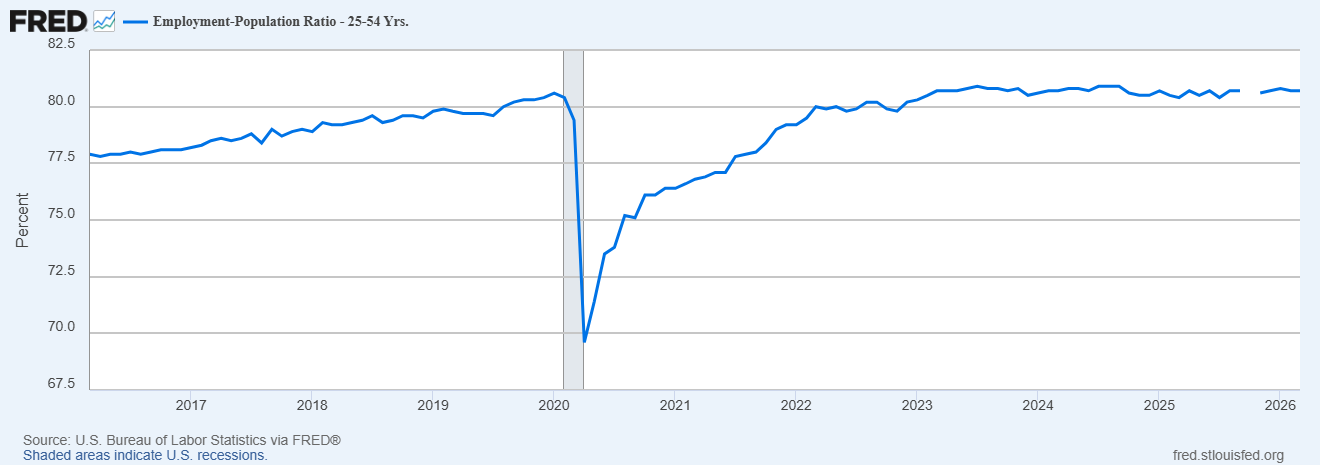

In the medium to long term, AI may replace all human jobs (or maybe not). But in the short term, AI doesn’t seem to be doing this yet. Employment rates for prime-age workers in the U.S. are hovering near all-time highs:

A recent survey of corporate CFOs found “little evidence of near-term aggregate employment declines due to AI.” A survey of European firms found no evidence of job reductions so far, despite rising productivity due to AI. Geoffrey Hinton, one of the pioneers of modern AI, famously predicted the imminent displacement of all radiologists by AI algorithms; in fact, radiologists are in greater demand than ever.

So even though AI may displace human beings en masse in the future, it’s not doing that today. But it is likely to change the nature of work. Software engineers, for whom “writing code” was a big part of the job description just a few months ago, are now mainly checkers and maintainers of code written by AIs. But this hasn’t eliminated the need for software engineers — at least, not yet. It has just shifted their job descriptions.

Humlum and Vestergaard (2026) find that so far, this pattern — workers shifting to new tasks without losing their jobs — is the norm, at least in Denmark:

[M]ost employers in [AI] exposed occupations have adopted chatbot initiatives, workers report productivity benefits, and new AI-related tasks are widespread. Yet…we estimate precise null effects on earnings and recorded hours at both the worker and workplace levels, ruling out effects larger than 2% two years after the launch of ChatGPT. What moves is the structure of work: employers absorb AI through task reorganization—including new tasks in content generation, AI oversight, and AI integration—and adopters transition into higher-paying occupations where AI chatbots are more relevant, though still too few to move average earnings. [emphasis mine]

In other words, so far, AI is replacing tasks, not jobs. Alex Imas and Soumitra Shukla have written that as long as there are a few things that only humans can do, this pattern can be expected to hold. Observers of AI consistently find that its capabilities are “jagged” — it’s much better at some tasks than others.

That’s good news for people who are worried about losing their jobs (at least in the next decade). But it’s still very troubling for people trying to decide what to study. A decade ago, it made sense — or at least, it seemed to make sense — to tell young people to “learn to code”. Nowadays, what do you tell them to learn? What tasks will be the ones that humans still need to do, and which will be subsumed by AI? With AI getting steadily better at a very wide variety of tasks, it’s hard to predict exactly what humans will still be doing in five years, even if you’re pretty sure they’ll be doing something.

I have some friends who have spent the last decade or more thinking carefully about what the future of work will look like in the age of AI. No one has ever found a satisfactory answer. As AI technology has developed and changed, even the most plausible predictions for the future of human labor tend to get falsified almost as quickly as they’re made.

But I’ve been thinking about this question too, and I think I’m beginning to see the shape of an answer. I think the near future of work will mostly be divided into three types of jobs — salarymen, specialists, and small businesspeople.

Let’s talk about specialists first, because they’re the easiest to understand. A new theory by Luis Garicano, Jin Li, and Yanhui Wu describes why some workers will keep their jobs largely as they exist today.

Like many economists, Garicano et al. envision a job as a bundle of various tasks. But they also theorize that in some jobs, these tasks are only “weakly bundled” — you don’t really need the same person to do all of those tasks. For these jobs, it would be easy to divide up the tasks between different workers — or between a human and an AI. But in other jobs, the authors assume that the tasks are “strongly bundled” — the same person who does one part of the job has to do the other parts, or the job can’t be done.

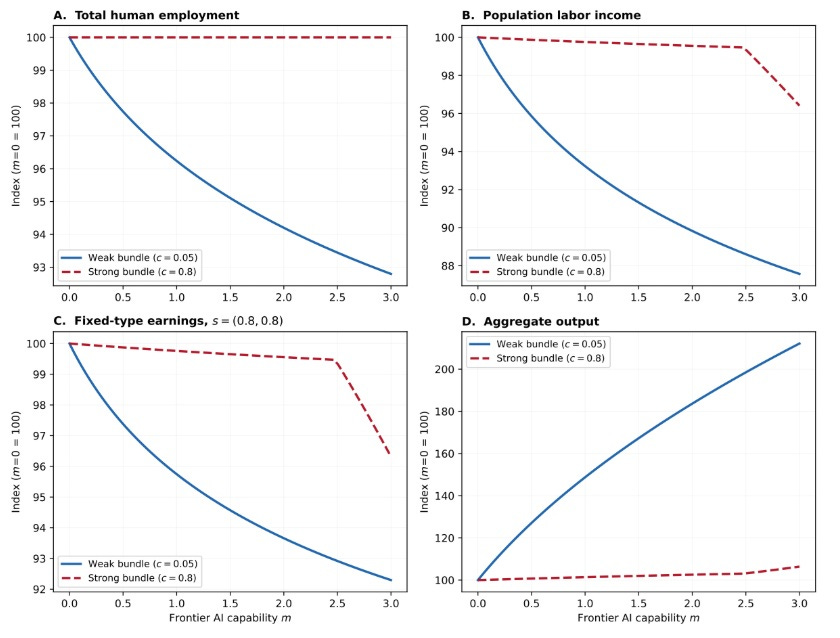

The paper’s basic conclusion is that AI tends to replace weakly bundled jobs a lot more quickly than it replaces strongly bundled ones. For example, they theorize that radiologists still have jobs because even though AI can do most of the task of basic scan-reading, there are a lot of other pieces of the job that radiologists still need to do in order to deliver patients the kind of care and expertise they demand. They foresee employment in strongly bundled industries resisting automation until AI capabilities get extremely good:

The people in those strongly bundled jobs are specialists. An example of a specialist might be a blogger. AI, so far, is very good at doing background research, proofreading, and a number of other tasks that are useful for the writing process. But even though it can generate infinite amounts of text, AI is not yet good at writing. Writing communicates a unique human perspective; simply pressing a button to generate text doesn’t say what you want to say. So the tasks that make up my own job are — so far, at least — strongly bundled. AI is making me more productive, but so far it isn’t putting me in danger of unemployment.

But what about those weakly bundled jobs? Garicano et al. predict that these will begin to decline only after demand becomes sufficiently inelastic; in other words, once AI becomes so productive that its output hits diminishing returns for the consumer. After that point, automation tends to replace human labor: it becomes a way to make the same amount of stuff with fewer workers, instead of a way to make more stuff with the same number of workers.
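To make the elastic-versus-inelastic logic concrete, here is a toy calculation. It is only a sketch under my own simplifying assumptions, not the model in Garicano et al.: demand has constant elasticity, labor is the only cost, and the price equals the wage divided by output per worker. The function name `labor_demand` and all of the numbers are hypothetical.

```python
def labor_demand(productivity, elasticity, wage=1.0, scale=100.0):
    """Workers needed in a toy market (illustrative assumptions only)."""
    # Assumption: competitive pricing, so price equals unit labor cost.
    price = wage / productivity
    # Assumption: constant-elasticity demand, Q = scale * price^(-elasticity).
    quantity = scale * price ** (-elasticity)
    # Workers needed = output divided by output per worker.
    return quantity / productivity


# Compare employment before and after AI quadruples output per worker.
for elasticity in (1.5, 0.5):
    before = labor_demand(1.0, elasticity)
    after = labor_demand(4.0, elasticity)
    print(f"elasticity={elasticity}: workers go from {before:.0f} to {after:.0f}")
```

In this toy setup, when the elasticity is above 1, cheaper output expands demand by more than productivity rises, so employment grows. When it is below 1, the same productivity gain means fewer workers are needed.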

Until that point, there will be quite a lot of work for people in weakly bundled jobs to do, because of expanded demand. And yet at the same time, companies won’t know which tasks to hire workers for, because AI’s “jagged” strengths and weaknesses will be constantly changing.

The rapidity with which Claude Code replaced the task of code-writing demonstrates this problem. In 2025, companies hiring software engineers could judge their merit based on how good they were at writing code. In 2026, companies have to judge the merit of software engineers based on how good they are at checking and maintaining code. Those skills don’t always go together.

The solution, I think, is to hire more generalists. Instead of picking people to do specific tasks, companies will pick people whose job is to constantly learn what AI is good and bad at, and to fill in the gaps. Cedric Savarese sums up this idea:

The first stage of ‘vibe freedom’ is…[t]he dreaded report that would have taken all night looks better than anything you could have done yourself and only took a few minutes…The next stage comes almost by surprise — there’s something that’s not quite right. You start doubting the accuracy of the work — you review and then wonder if it wouldn’t have been quicker to just do it yourself in the first place…You argue with the AI, you’re led down confusing paths, but slowly you start developing an understanding — a mental model of the AI mind. You learn to recognize the confidently incorrect, you learn to push back and cross-check, you learn to trust and verify…

Curiosity becomes essential. So does the willingness to learn quickly, think critically, spot inconsistencies, and to rely on judgment rather than treating AI as infallible…That’s the new job of the generalist: Not to be an expert in everything, but to understand the AI mind enough to catch when something is off, and to defer to a true specialist when the stakes are high[.]

Essentially, AI is going to be unreliable, but not in a predictable way. Its mistakes and shortcomings will require constant human exploration and patching. This is the job of a generalist. Instead of people who do “payroll” or “back-end engineering” or “accounting”, companies will need to hire people who can do a little bit of everything, if and when the AI messes something up.

In fact, we have an example of a corporate system that relied very heavily on this type of generalist: Japan. Until very recently, Japanese companies treated their “salarymen” as almost interchangeable labor, rotating them between different divisions and requiring them to learn a wide array of tasks. You might start your career in HR, then move to accounting, then do some product design, and so on.

This system might not have been very efficient, and the lack of specialization may have contributed to Japan’s notoriously low white-collar productivity. And it may be why salaryman jobs have been in decline for many years. But in the age of AI, it may finally make sense. When human expertise is replaced by AI expertise, humans’ role may be to flit from task to task, doing whatever the AI is bad at, and supervising AI at whatever it’s good at.

In other words, instead of hiring people who are good accountants or good HR specialists or whatever, companies might start hiring people who are just good AI wranglers, and who have the agency, mental flexibility, and energy levels to keep plugging the ever-shifting holes in what AI can do. In other words, salarymen.

The salaryman system also naturally lends itself to long job tenure. If I’m a highly specialized engineer, I can take my talents and move to a different company with my human capital intact. But if I’m a generalist who does a little bit of everything, what becomes more important to my value as a worker are my human networks within a company, and my understanding of the company’s system. This makes me a much less portable worker; I’m inclined to stay at the company where my long job tenure makes me more valuable than newcomers.

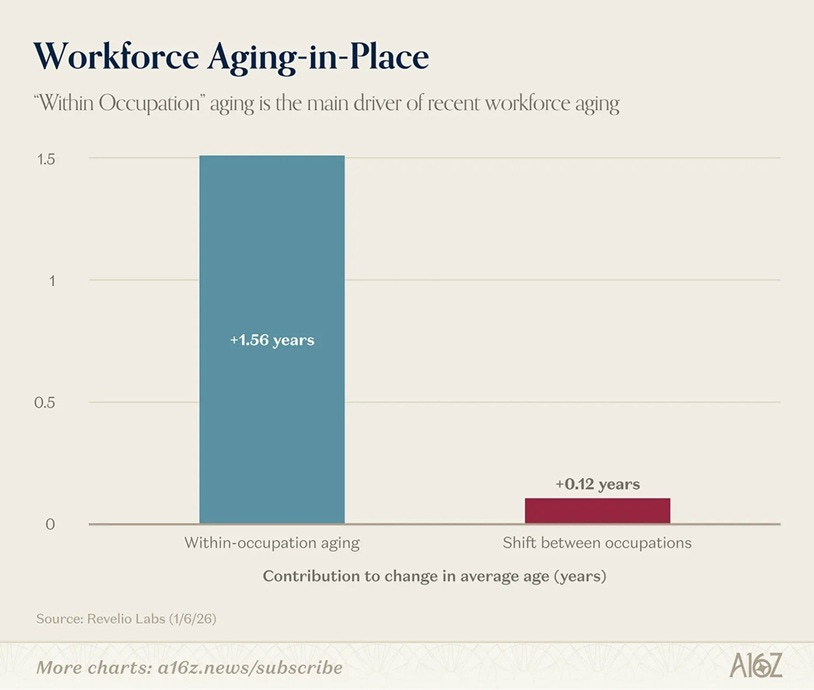

You can already see hints of this happening in American companies. We’re in a “no-hire, no-fire” economy, in which workers are hunkering down in their jobs and refusing to switch, and companies are keeping them there instead of hiring new workers:

This is exactly what you’d expect from a model of firm-specific human capital — in other words, from an economy where everyone increasingly realizes that modern employees need to act like Japanese salarymen. The hypothesis here is that people don’t want to leave their jobs (and companies are happy to keep them in their jobs) because their technical skills might be devalued due to rapid AI progress; instead, they’re staying in their companies, where knowing people and knowing how things work are still important.

So America may yet come to embrace the way of the salaryman. But the third category of future employment will also be very Japanese: self-employment and small business.

Japan has long had a very high prevalence of small business ownership. It has one of the world’s largest proportions of small and medium-sized enterprises. In manufacturing as well as in retail, Japan has traditionally had a lot more small businesses than other OECD countries. This is now decreasing, as the population ages and business owners retire without heirs or proteges. But it still might point the way to the AI-enabled future.

AI creates leverage; it allows you to do more with a smaller team. For many businesses, the optimal size of this team will fall to only one person or a few people. Thus, I expect to see a lot of small companies sprout up, as people use AI agents to increase their productivity to the point where they only need a few employees (or even zero).

In other words, I expect AI to make the American labor system look a bit more like the Japanese labor system of the 1960s-2000s. There will be a bunch of generalists running around looking for things to do within their companies, a bunch of small businesspeople striking out on their own, and a few specialists with specific skills that still make them valuable. If you’re not one of the lucky few in that last category, your choices will be to become a cog in an ever-changing corporate machine, or to strike out on your own and manage an AI “team” to sell some good or service directly to the consumer.

This might not be the most optimistic or enticing view of the future of work, especially to people who have lived their whole lives thinking that their specific job skills are what made them valuable to society. But it’s probably better than humans becoming economically obsolete.

I swear there's not an econ/tech writer on the planet that has any idea what engineers actually do.

A software engineer is most importantly a *type of engineer*. And by and large engineers are people who define and solve problems. "Coding" is incidental to the job. It's like thinking mechanical engineers were all going to lose their jobs because CAD came along. After all, didn't they spend their days at a drafting table?

You keep mixing up the technological toolset of the job with, like, the actual substance of the job in this frustratingly reductive way.

I'm sympathetic, a bit, because there was this period of time where software engineers got a little high on their own supply and convinced themselves and everyone else that "coding" was this God-like skill set that was just *different* than everything else, and so I don't mind seeing them taken down a peg. But fundamentally coding was never engineering.

“Software engineers, for whom ‘writing code’ was a big part of the job description just a few months ago, are now mainly checkers and maintainers of code written by AIs.”

This gets a lot of press, and as a software engineer it drives me a little crazy every time I see it. Even if we accept that the coding part has been or will be handled exclusively by AI, the engineer’s actual role hasn’t changed. The ability to competently produce working code is still in the job description, and I would argue that it was never the most important part in the first place. Using AI doesn’t turn a software engineer into a “checker and maintainer of code written by AI” any more than pneumatic nail guns turned carpenters into checkers and maintainers of nailed-together pieces of wood.