AI has the worst sales pitch I've ever seen

"Our product will make you economically useless, and possibly kill you" is not a value proposition.

“Hi. Do you have a moment? I’m from the Cursed Microwave company. Our product is much better than a traditional microwave. Not only can it automatically and perfectly cook all your food, it also microwaves your whole body, so you and your family are paralyzed and unable to ever work again. Don’t worry, though, because when everyone has a Cursed Microwave, our society will probably implement Universal Basic Income, and you and your children can just go on welfare! Oh, by the way, we estimate that there’s a 2 to 25 percent chance that our microwaves will put out so much radiation that they destroy the entire human race.”

If a door-to-door salesman gave me this pitch, I would gently see him out the door, and then quickly call the FBI.

But this is only a modestly exaggerated version of the pitch that the big AI labs — OpenAI and Anthropic — are making to the world about their technology!

Our product might kill your whole species

Let’s start with the “destroy the entire human race” part. For reasons I’ll explain, I think this is actually the less dumb part of the pitch the AI labs are making, but it’s still wild to hear them say it.

Sam Altman, head of OpenAI, once told Mathias Döpfner that he believes the risk of human extinction from AI technology to be about 2%. More recently, he amended this to “big enough to take seriously.”

Back in 2016, Altman was considerably more alarmist:

Despite his leadership status, Altman says he remains concerned about the technology. “I prep for survival,” he said in a 2016 profile in the New Yorker, noting several possible disaster scenarios, including “A.I. that attacks us.”…“I have guns, gold, potassium iodide, antibiotics, batteries, water, gas masks from the Israeli Defense Force, and a big patch of land in Big Sur I can fly to,” he said.

Obviously, most human beings do not have big patches of land in Big Sur they can fly to, so it’s understandable why statements like this might cause alarm.

Anthropic’s Dario Amodei is even more apocalyptic. He has repeatedly stated that he believes there’s a 25% chance that AI dooms humanity, or that things “go really, really badly.” (One time he said 10% instead.)1 He has written a long essay, “The Adolescence of Technology”, explaining what he thinks these risks are. In addition to super-powered terrorism and fascism, the risks include autonomous godlike AI that decides to destroy or enslave humanity.

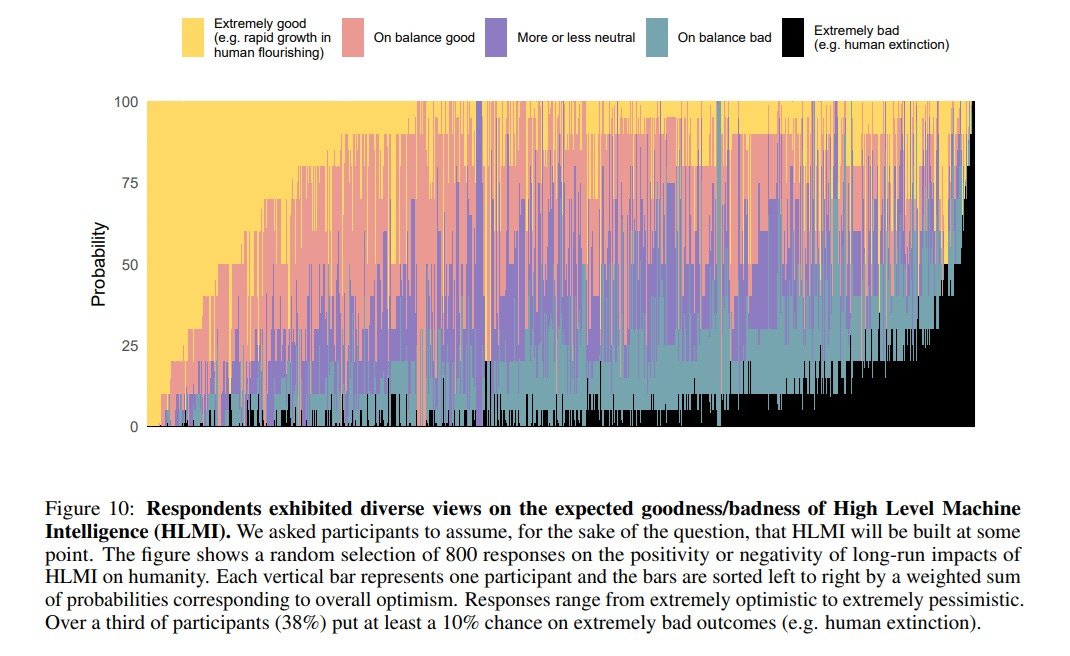

Dario is a bit more apocalyptic than the average person in the AI industry, but he’s not far out of the distribution. Here’s a chart showing how 800 published AI researchers responded to a question about AI’s impact in a 2023 survey:

Presumably the left tail of the distribution consists mostly of AI safety researchers who are obsessed with the risks. But about a third of the researchers on this chart give a 10% or greater probability of human extinction or similar outcomes, and relatively few respondents give a number below 5%.

Let’s step back for a second and ask what seems like it should be a pretty basic question: Why on Earth would you make something that you thought had a 25% chance of wiping out your entire species? Or even a 5% chance? I don’t know about you, but to me that sounds like a pretty stupid thing to do!

In fact, I can think of two reasons to do it:

1. You think if you don’t do it, someone else will

2. You think if it doesn’t kill you, it’ll make you immortal

Let’s talk about the second of these, since it’s interesting, and I see almost no one talking about it. Throughout history, rich and powerful men have always sought out a technology that would grant them immortality, or at least vastly extended lifespan. Genghis Khan spent a good part of his later years searching for a sage to tell him the secret to eternal life. Modern rich and powerful people are no different, as evidenced by the large amounts of money thrown at highly speculative longevity startups.2

Now, with the potential advent of superintelligence, they’ve finally found a sage who actually might be able to give them the long-sought elixir. In his essay “Machines of Loving Grace”, Dario writes that the main upsides of AI are that it could radically accelerate progress in biotechnology and neurotechnology. He writes that this could make humans functionally immortal:

Doubling of the human lifespan. This might seem radical, but life expectancy increased almost 2x in the 20th century (from ~40 years to ~75), so it’s “on trend” that the “compressed 21st” would double it again to 150. Obviously the interventions involved in slowing the actual aging process will be different from those that were needed in the last century to prevent (mostly childhood) premature deaths from disease, but the magnitude of change is not unprecedented…[T]here already exist drugs that increase maximum lifespan in rats by 25-50% with limited ill effects. And some animals (e.g. some types of turtle) already live 200 years…Once human lifespan is 150, we may be able to reach “escape velocity”, buying enough time that most of those currently alive today will be able to live as long as they want, although there’s certainly no guarantee this is biologically possible.

A 25% chance of humanity dying is a lot. But from your personal perspective, the chance of personally dying within the next century, assuming no radical progress in longevity technology, is approximately 100%. So if the rest of the world didn’t matter to you, and it was either certain death in a few decades or a 25% chance of death in one decade with a 25% chance of eternal life, you might be willing to roll the dice.

Of course, most AI founders, including Dario, do care about the human race as a whole.3 They don’t just want to make themselves immortal; they’d like to make everyone else immortal too. From a certain perspective, this might be worth a roll of the dice on the whole future of the species.

But in fact, I don’t think immortality is the main reason the labs are pushing forward as hard and fast as they can with a technology they believe may kill us all. I think the first reason in my list — “If we don’t build it, someone else will” — is more important. Everyone at Anthropic and everyone at OpenAI knows that if they don’t build a superintelligent AI, Elon Musk will. Or the Chinese Communist Party will.4 And if that happens, our only futures are A) a machine god enslaved to the will of Elon Musk, B) a machine god enslaved to the will of the Chinese Communist Party, and C) an autonomous machine god that does whatever it feels like.

All three of those options sound bad. So despite their personal fears and reservations — and trust me, most of them do have a lot of personal fears and reservations about what they’re doing — they feel like they have no choice but to beat their less scrupulous competition to the finish line, in order to make sure that the machine-god-baby is raised with good values. I hear the term “Red Queen’s race” thrown around a lot in San Francisco these days. Few AI researchers would like to abandon the technology, but a lot would like to slow down or even pause its development, to give them more time to work on minimizing the dangers.

But that’s easier said than done. It’s extremely rare for a technology to slow down because a small group of leading researchers refused to push it forward; in fact, I can only really find one example in history (the gain-of-function research pause after bird flu in the early 2010s). But AI research is a huge enterprise, and a voluntary pause widespread enough to make a difference presents an utterly impossible coordination problem.

If a voluntary pause is out, that leaves regulation, either at the national level or by international agreement. Dario has publicly called for greater regulation of AI, and Anthropic has spent a bunch of money lobbying for greater government control. Even Elon Musk has called for an AI pause in the past. These calls are often dismissed as companies shilling for government protection for their incumbent positions, but I think their fears are sincere.

This is why I think “our product may kill you” is by far the less insane part of the pitch the AI labs are making. In fact, it’s more like “Our version of our product is less likely to kill you, and if you support our call for greater regulation, the danger can be minimized.” Some of the scientists who invented recombinant DNA definitely thought there was a chance it could wipe out humanity, as did many of the scientists who invented nuclear technology. They raised the alarm and pushed for responsible regulation.

Right now, the AI founders who are more worried about existential risk — for example, Dario and Elon — have pushed harder for a pause than the ones like Sam Altman who think the risk is lower. And even Altman is putting lots of OpenAI’s money toward a foundation dedicated to studying and preventing the risks of AI. That’s all reasonably rational, and it will probably play well with the public.

I still think this pitch could be greatly improved, though. Humans have an unfortunate tendency not to recognize risks before disasters actually happen — as an example, we didn’t treat fertilizer as a terrorism risk until Timothy McVeigh blew up a building with it, even though the chemistry of how to make a fertilizer bomb was widely known. Right now, everyone has seen Terminator and The Matrix, but no one thinks they’re real.

If the AI safety pitch is “superintelligence might kill us all”, we’re kind of screwed, because people won’t believe it until it happens, and then it’s too late. Instead, AI labs should focus their safety pitch on something regular people do believe in: terrorism. Talk about radicals using AI agents to vibe-code a super-Covid virus, and regular people’s ears might perk up, because that’s a danger that’s closer to things they’ve actually seen and experienced before.

But anyway, on to the second part of the AI pitch. This is the idea that AI is going to make humans economically obsolete. AI researchers and founders keep running around saying this, and I think it’s a huge own goal.